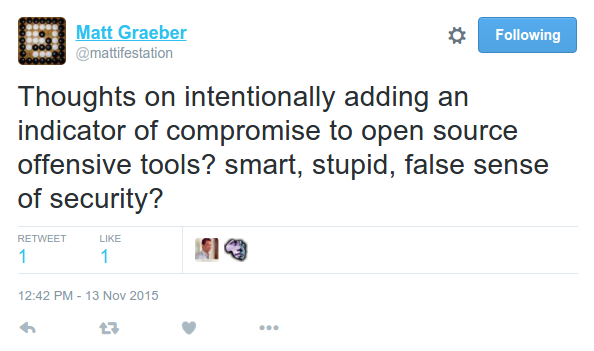

Recently, @mattifestation posed a question on Twitter. I tried to reply at the time, but got called away to a meeting. That said, I feel there is a lot more to be said about this topic than I could put down in a simple Twitter post.

Problem We’re Solving

The first thing to consider is what problem this is trying to solve. I can’t (and won’t) speak for Matt, but my understanding is that it is intended to stop script kiddies from using open source security tools for actual compromises of organizations in the real world.

Over the last few years, security tooling has exploded into a ton of amazing capabilities, enabling consultants to demonstrate near-nation-state-level capabilities against client networks. This is fantastically good for the industry, but it is also good for the bad guys. We also run into the issue of clients getting angry with their antivirus companies when antivirus doesn’t detect commodity pentest tools. That’s an understandable concern but, as with all dual-use technology, the harm comes from the user, not the tool.

So, given that bad guys will use our tools, will purposefully adding signatures to them make us safer? Will antivirus companies develop signatures to look for your “gimme” IOCs, or will they develop signatures to look for real IOCs?

Tripping Up Careless People

In order to ensure that your tools continue to stay relevant for the good guys, it has to be easy to remove the signature. After all, if you’re asking me to spend the time to modify your tool before using it, I’d better make sure that I use it and get value out of it. Similarly, if I have to remove a signature and it still gets caught, I’m going to be upset.

Why is that? Because instead of wondering whether or not the AV was good enough to detect the tool, now I have to wonder whether or not your “Remove Signature” function worked. And I don’t have time to troubleshoot your code. Then again, we’ve also got AV products that scan the disk for files literally called “Invoke-Mimikatz.ps1” and flag on that fact alone, so it’s not like there’s a high bar to overcome on that side either.

At the same time, the notification that “Hey, this tool has a signature and here’s how to remove it” needs to be readily apparent to new users of the tool. If I’m talking with another consultant and recommend that “You should check out $TOOLX, it’s really awesome and you’ll benefit from it”, I don’t want to have to remember to tell them that it has a signature. Likewise, when they pick it up to use it, they should be able to quickly note that it has a signature, remove it, and profit.

So we’re expecting that the bad guys aren’t going to be able to do this exact same thing? That’s our great hope? That the script kiddies aren’t going to notice that we put signatures into the tools?

Harming Consultants

And this is where it gets personal for me. I’m in consulting and I work with a team of other folks who all travel around to different clients all year long. Let’s just make up some numbers. We’ll assume that I work on a team of 10 people (myself included) and that each person on the team deals with 15 clients per year. We use full disk encryption on our laptops and wipe-and-reimage every time we finish an engagement, to ensure that client data never goes anywhere else.

In an average year, that’s 150 times (at minimum) that our team is reimaging an assessment laptop. And yes, we’ve got build scripts to help automate it and lay down the particular tools that we use. But what happens if something changes in a tool, if a developer decides to change how a tool is signatured? Exactly what mechanism are they going to use to build the signature in? Will removing it be a simple sed command from the command line, or will I have to recompile binaries?

Can I guarantee that, out of those 150 times my team is rebuilding assessment laptops, everything will work fine? That if there are any manual steps, people will remember them? Can I trust that my junior consultant is going to remember to validate that his tools are all de-sigged before going on-site to a client? Or will I make a mistake? I certainly do at times, and I’d hate to screw up on a client site because I didn’t check whether a particular tool had added a signature since the last time I looked at it.
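To make that validation burden concrete, here is a minimal sketch of the kind of pre-engagement check a team would end up bolting onto its build scripts. It assumes the deliberate signatures are plain byte strings that the tool authors document; the marker values and the function name below are hypothetical placeholders, not taken from any real tool.

```python
from pathlib import Path

# Hypothetical markers: stand-ins for whatever deliberate IOC strings
# tool authors might document. None of these come from a real tool.
SIGNATURE_MARKERS = [
    b"EXAMPLE-TOOL-SIGNATURE",
    b"REMOVE-ME-BEFORE-USE",
]


def find_signatured_files(tools_dir, markers=SIGNATURE_MARKERS):
    """Return {file path: [markers found]} for every file under tools_dir."""
    hits = {}
    for path in sorted(Path(tools_dir).rglob("*")):
        if not path.is_file():
            continue
        try:
            data = path.read_bytes()
        except OSError:
            # Unreadable files are skipped in this sketch; a stricter
            # check might treat them as failures instead.
            continue
        found = [marker for marker in markers if marker in data]
        if found:
            hits[str(path)] = found
    return hits
```

A build script could call `find_signatured_files()` on the tools directory and refuse to finish if the result is non-empty. Note what this costs: someone has to keep the marker list current for every tool, every release, and a check like this only catches signatures that are simple strings. It also has to live outside the directory it scans, or it will flag itself.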

Barrier To Entry

The INFOSEC community is the subject of a lot of discussions about how hard it is to enter, how many vacancies there are, and what skillsets are required to get your foot in the door. We constantly get asked how to be a hacker. What are the different jobs and what skills and responsibilities does each have?

While understanding that we don’t want to hire people who are so negligent that they cause harm to the systems they test, we want it to be as easy as possible to onboard people into the INFOSEC community and help them be productive. We can teach people with basic networking understanding how vulnerability assessments work and, as it starts to click, we can start walking them through the toolchain to more complex attack scenarios.

I believe (and I have a B.S. in Computer Science so I’m biased) that having a background in programming is immensely valuable for INFOSEC professionals (whether on the offensive or defensive side). However, I don’t think it needs to be a requirement for getting started in the field. By the time you’re reaching mid-level career, you should definitely know at least one programming language, but certainly not all.

And yet let’s look at the languages behind the tools that an offensive consultant might need to utilize. We’ve got Ruby, Python, PowerShell, C, etc. Just recently, I had to dig through some Objective-C to look at some exploit code before I ran it on a customer network. Do we really expect people new to the industry (still trying to learn which tools are used for which purposes, methodology, etc.) to be able to read through and understand the different programming languages well enough to safely remove the signatures?

Or how about just the psychological signal we’re sending to novices when we talk about weeding them out if they can’t read a README (among all of the other responsibilities they have)?

Open Source and Choice

Obviously, I can’t force tool developers to do anything they don’t want to. The simple fact that so many talented individuals are writing tools and releasing them for free use by the community is an incredible act of generosity and, I believe, really helps to measurably increase the security of networked systems. However, I’d caution them not to purposefully build in “idiot checks” or easily-disabled IOCs, because I think the harm far outweighs any benefit.

In my personal opinion, developers who choose to ship their tools with these easy IOCs risk losing “market share” to developers who build tools without these signatures. In the nature of open source, there’s nothing stopping people from forking these tools pre-IOC and distributing and using that instead. To me, however, it just seems like an adversarial relationship when people need to be undermining the efforts of tool developers on a regular basis, and I’d certainly prefer that the community not go in that direction.