Something interesting happened to me recently: a client called to tell me they’d just had a penetration test done, but they needed someone to help them make sense of the report and prioritize the findings for their organization. I hadn’t personally worked with this client before, and my firm had only done some unrelated work for them, but they knew we offered similar services and trusted us. The worst part is that the consultant had done a fairly good job and had what could have been high-impact findings; they just flubbed the report pretty hard. Why does this matter? Because of something I tell all of the new guys on our team:

No matter how much fun you have during the engagement, the only thing that matters is the report.

Why is that? Because of situations exactly like this one. Do you think this client is going back to that consulting firm the next time they want penetration testing on their network? Or do you think they’ll approach someone else (whether my firm or a third party) instead? That’s dollars out of your pocket because of your report quality. Those dollars decide whether you can buy additional tools, whether you can hire that extra guy, or whether you can make that mortgage payment.

With that in mind, here are a few things I saw wrong in this report that I think the industry could learn from.

Lack of Focus on Client

This is a big deal that I’ve seen countless people mess up. In the quest to give perfect, easily repeatable security guidance regardless of who the client is, consultants end up giving guidance that the client cannot follow (for any number of legitimate reasons). I encourage people to talk the recommendations over with our clients and determine how feasible they are to implement. If a customer has a strong reason why they can’t do $SOMETHING, we see if we can come up with an alternate recommendation that they can do, even if it’s less secure.

That’s not to say that we just shrug and leave it at that. We note that we’d prefer they implement X, but that due to $REASONS, and in consultation with the client, we believe Y is an alternate recommendation they can implement: not as good as X, but better than doing nothing. If you ignore what your client can’t or won’t change about their network, you risk having your entire recommendation discarded. In its place, the client will either ignore the finding (bad!) or substitute whatever they’d rather do into your report (even if it’s something you disagree with). I’ve found the overwhelming majority of clients are very happy when a consultant is willing to work with them to understand which recommendations would work best on their network instead of on the Platonic ideal of networks. You also gain enormous credibility with a client if you can honestly say you’ve implemented a given recommendation yourself and point out the things to watch for in the process.

Writing To The Wrong Audience

A report typically has many different parts, but generally people will agree that there is an executive summary and a list of technical findings. There may be other parts, but those two are generally pretty constant. Picking up a report, I can tell within the first paragraph or two of the executive summary whether or not the report will have value to a client organization. How? Because of how you write it.

I’ve seen countless reports where the author has made the claim “It’s okay, the client is technical. I can include this stuff in the executive summary.” 99% of the time, you’re wrong. The client would like to forward your report to their management (who isn’t technical) to help demonstrate the impact of the engagement, except they can’t, because you filled it so full of technical jargon, obscure recommendations, and unnecessary details that your client doesn’t feel comfortable passing it up the chain.

The ideal executive summary (in my own opinion, which you’re all going to disagree with) should be written at a very high level and tailored to a non-technical audience. IP addresses or hostnames should never appear in it, nor should anything with a crazy acronym. If you can’t give your report to your favorite non-technical person and ask them to summarize the key issues on the network, you’ve done it wrong. Writing in such a way that anyone can pick up the report, read the executive summary, and get an understanding of “Oh, these are bad, we should fix those” allows your report to travel much further around the client’s organization than it otherwise might have, resulting in increased impact and associating your name with quality reporting.

Overemphasis on Tool Output

I get it, right? We all run various tools during our engagements, especially if the client wants something like a vulnerability analysis scan done. But we also need to understand the more important thing: how do our customers use our reports?

Do they really need a $20,000 paperweight and a few dead trees? Do those extra few hundred pages really drive home the point that their patching policy is terrible, their passwords are being transmitted in the clear, and you just stole a billion dollars’ worth of intellectual property? Or is it more likely that the customer is going to pick up the report, read the first dozen pages, and throw away the rest?

I was on the receiving end of an assessment back when I did full-time network defense. The final report we got was about 800 pages and included things like every missing patch on every system on the network. Pages upon pages of things like “MS08-067 is missing from 10.1.2.3, 10.1.2.4, and 10.1.2.5.” Was I ever going to go host-to-host and apply patches that way? Of course not. That would be stupid.

Instead, I was going to go to my patch management system and determine exactly why those systems didn’t get the patches. The hundreds of pages (and countless hours of analysis, reporting, and editing) that went into providing those other details were an absolute waste of everyone’s time. I can honestly say that not a single person at our organization read the entire 800+ pages of the report. Even adding up everyone who did look at it, there were hundreds of pages that no one ever read.

As a result, it’s best to write the findings in such a way that the client will be able to extract value from them. Do they care about every missing patch on every host? Chances are, no. But do they care that they’re missing 3,000 patches across their network, some dating back as far as 2009? Yes, I’d bet they do. It’s all about how you write the findings and how the client plans on leveraging that information.
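As a rough illustration of that kind of rollup, here is a minimal Python sketch. It assumes the scanner’s missing-patch output has already been reduced to rows of host, patch ID, and patch release date; the `MissingPatch` record, the `summarize` helper, and the `EXAMPLE-PATCH-1` entry are all hypothetical, and real scanner exports will need their own parsing. The point is the shape of the result: a handful of network-wide facts instead of one line per host.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class MissingPatch:
    """One scanner row: a patch missing from one host (hypothetical schema)."""
    host: str
    patch_id: str
    released: date


def summarize(findings):
    """Roll per-host missing-patch rows up into network-wide facts."""
    hosts_by_patch = defaultdict(set)  # patch_id -> hosts missing it
    released = {}                      # patch_id -> release date
    for f in findings:
        hosts_by_patch[f.patch_id].add(f.host)
        released[f.patch_id] = f.released
    return {
        # Unique (host, patch) pairs, so duplicate scanner rows are not double-counted.
        "missing_instances": sum(len(hosts) for hosts in hosts_by_patch.values()),
        "distinct_patches": len(hosts_by_patch),
        "affected_hosts": len({f.host for f in findings}),
        "oldest_missing": min(released.values(), default=None),
        # Patch IDs ordered from most to least widespread.
        "most_widespread": sorted(
            hosts_by_patch, key=lambda p: len(hosts_by_patch[p]), reverse=True
        ),
    }


# Hypothetical sample rows; MS08-067 and the hosts echo the example above.
findings = [
    MissingPatch("10.1.2.3", "MS08-067", date(2008, 10, 23)),
    MissingPatch("10.1.2.4", "MS08-067", date(2008, 10, 23)),
    MissingPatch("10.1.2.5", "MS08-067", date(2008, 10, 23)),
    MissingPatch("10.1.2.3", "EXAMPLE-PATCH-1", date(2016, 3, 8)),
]

summary = summarize(findings)
print(
    f"{summary['missing_instances']} missing patches "
    f"({summary['distinct_patches']} distinct) across {summary['affected_hosts']} hosts; "
    f"oldest released {summary['oldest_missing']:%Y}."
)
```

A sentence built from that summary (“3,000 missing patches, some from 2009”) belongs in the finding; the raw per-host rows can go in an appendix or a spreadsheet for whoever runs the patch management system.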

Meaningless Graphs

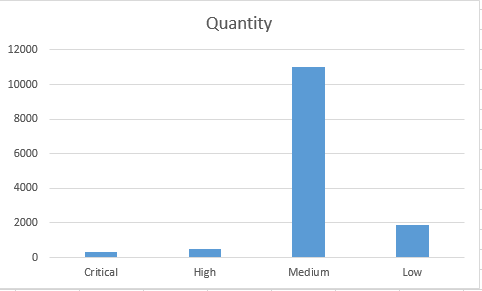

Very closely related to the above point is our profession’s love for meaningless graphs. The report that our client received had some great graphs that provided zero value, because they were driven by data that nobody cared about. For example, this consulting firm counted each host that had a vulnerability as an additional item when coming up with the total count of vulnerabilities on their network. What’s that look like in a graph?

If I’m a client, what am I supposed to take away from that graph? I guess I have a lot of Medium priority vulnerabilities. Over 10,000 of them, in fact. That seems… bad? Maybe? I mean, at least I don’t have that many criticals or highs, but is that a lot? Are they easy to fix or hard? Will it take me a lot of time? A graph is supposed to help you visualize data in a meaningful way. This graph does none of that. It only takes up space and raises questions that the rest of the report fails to answer.
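To make the counting problem concrete, here is a small Python sketch. It assumes scanner output has been reduced to hypothetical `(host, finding_id, severity)` rows; the finding IDs and severities below are placeholders, not real data. Counting every row (one per affected host) inflates a single widespread issue into many “vulnerabilities”, while counting distinct findings and reporting separately how many hosts each one affects gives the reader something closer to actionable.

```python
from collections import Counter

# Hypothetical scanner rows: (host, finding_id, severity).
rows = [
    ("10.1.2.3", "FINDING-1", "Medium"),
    ("10.1.2.4", "FINDING-1", "Medium"),
    ("10.1.2.5", "FINDING-1", "Medium"),
    ("10.1.2.3", "FINDING-2", "Critical"),
]


def count_per_host(rows):
    """Counts every (host, finding) row, one per affected host."""
    return Counter(severity for _host, _finding, severity in rows)


def count_unique(rows):
    """Counts each distinct finding once, however many hosts it affects."""
    unique = {(finding, severity) for _host, finding, severity in rows}
    return Counter(severity for _finding, severity in unique)


def hosts_per_finding(rows):
    """How widespread each distinct finding is (duplicate rows ignored)."""
    return Counter(finding for _host, finding, _severity in set(rows))


print("Per host:", dict(count_per_host(rows)))
print("Unique:  ", dict(count_unique(rows)))
print("Spread:  ", dict(hosts_per_finding(rows)))
```

With the per-host method, one misconfiguration on three machines shows up as three Medium vulnerabilities; with the unique count it is one finding that affects three hosts, which is how a client would actually go about fixing it.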

Not Protecting Sensitive Information

Finally, we stumble across this mess. I completely understand (and agree with) the desire to show high-impact findings in a report using screenshots or terminal logs from the exploited systems. If you gain access to something that makes people’s jaws drop with how bad it is, including it in your report makes the client that much more likely to ensure it gets fixed. However, we need to make sure that we’re still protecting data.

Just because the client is (currently) doing a terrible job of protecting sensitive data doesn’t give you a free pass from protecting it as well. If you’re going to screenshot PII/PHI, you have a moral (if not legal) obligation to redact it in your report. Leave just enough that the client can recognize where it came from and that it’s real, but not enough to harm the affected individuals if your report were ever made public.
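For terminal logs pasted into a report as text, a simple pass like the Python sketch below can do the “leave just enough” part automatically. It assumes the only PII of concern is US Social Security numbers written in the `123-45-6789` format; the pattern and the `redact_ssns` helper are illustrative only. Real data comes in many other formats (names, dates of birth, account numbers), and screenshots still need their pixels redacted by hand, so treat this as a first pass rather than a substitute for review.

```python
import re

# Matches SSN-style numbers written as 123-45-6789 (assumed format only).
SSN_PATTERN = re.compile(r"\b(\d{3})-(\d{2})-(\d{4})\b")


def redact_ssns(text: str) -> str:
    """Mask all but the last four digits of each SSN-style number."""
    # Keeping the last four digits lets the client confirm the record is real.
    return SSN_PATTERN.sub(lambda m: "XXX-XX-" + m.group(3), text)


# Hypothetical log line captured during an engagement.
log_line = "jdoe  123-45-6789  DOB 1970-01-01"
print(redact_ssns(log_line))
```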

Similarly, this is a report of how you just compromised their network! Don’t you think a bad guy would love to read that report and get some ideas, so they could break in without duplicating your effort? Encrypt your report before you send it to the client. Sending unprotected PDF files containing privacy-sensitive information and exploit details across the Internet is just asking for trouble.

Conclusion

Just like your executive summary needs to end with a conclusion that wraps up your key findings, so does this blog post. Reporting is a big deal: it’s what the customer pays for. In an industry where we brag about psychological manipulation and fooling people into doing things for us, too many of us can’t write a report to save our lives. Clients who read our reports notice when people do good (and bad) jobs, and they spend their money accordingly. Out of all the reasons to lose business, “because I write unreadable reports” is a pretty terrible one.